Official statement

What you need to understand

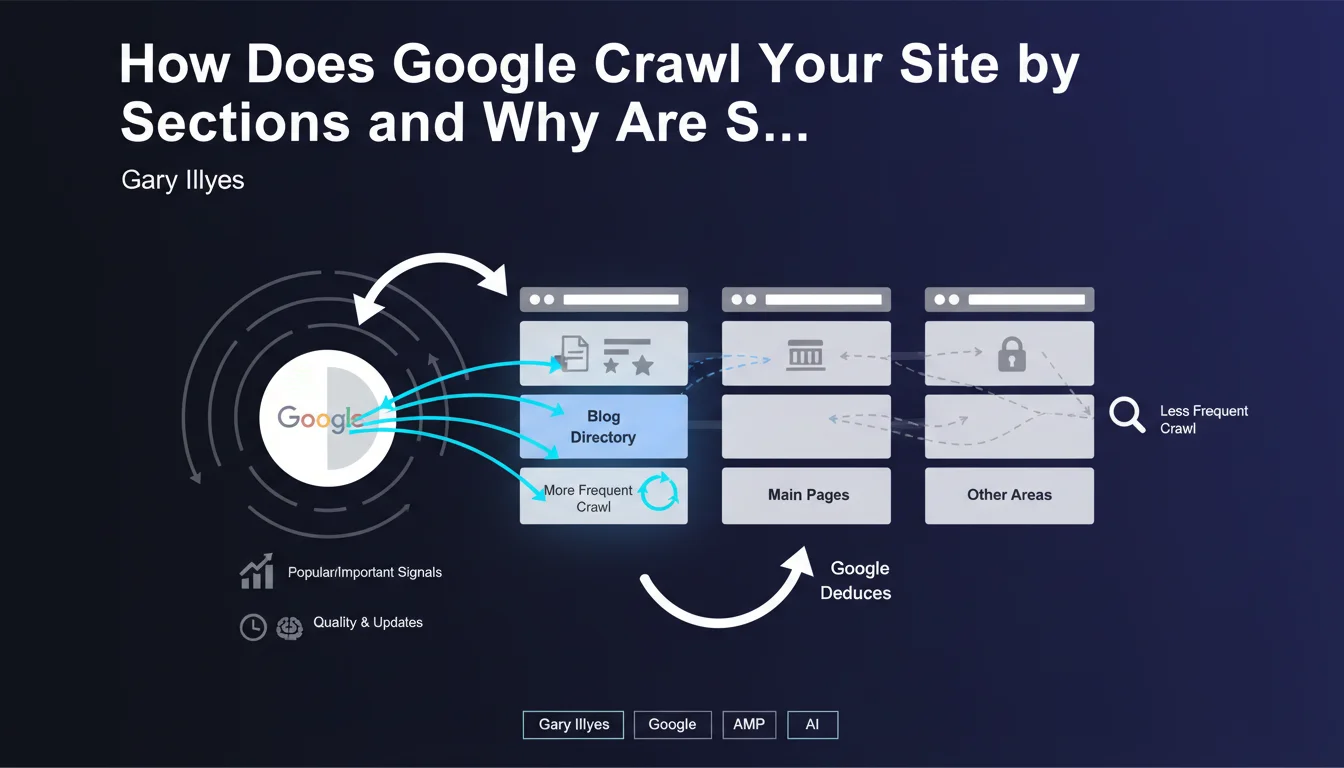

What Is Selective Crawling by Site Sections?

Google no longer treats a website as a monolithic entity. The search engine now analyzes different sections of a site independently to optimize its crawl resources.

Concretely, Googlebot identifies high-value areas (blog, news, product categories) and adjusts its crawl frequency based on quality and popularity signals. A dynamic, popular section may be visited daily, while a static archive may only be crawled about once a month.

What Signals Determine the Crawl Frequency of a Section?

Google combines several indicators to assess the importance of a section. How often content is updated is one signal, but it is not the only decisive criterion.

External popularity signals play a major role: backlinks pointing to this section, social media mentions, user traffic. If a /blog/ section regularly receives inbound links and generates engagement, Google understands it deserves special attention.

Why Does This Approach Change the Game for SEO Professionals?

This revelation explains many behaviors observed in Google Search Console. The statuses "Crawled - currently not indexed" and "Discovered - currently not indexed" often reflect these crawl priorities.

- Crawl budget is no longer uniform across the entire site but distributed by sections

- Sections deemed of low quality or unpopular will be marginalized in the crawl

- Site architecture and directory organization directly impact visibility

- Google can deprioritize certain areas for long periods if they don't generate positive signals

SEO expert opinion

Is This Statement Consistent with Field Observations?

Absolutely. This revelation from Gary Illyes confirms what we've been observing for several years on large-scale sites. E-commerce sites with thousands of product pages notice that certain categories are explored daily while others are neglected for weeks.

Media sites with multiple thematic sections also see significant disparities in crawling. A hot news section may be crawled every hour, while a dormant historical section is only visited sporadically, even if it is technically accessible.

What Important Nuances Should Be Added to This Statement?

Caution: this logic doesn't apply in a binary way. Google doesn't completely abandon a section; it lowers its priority. An important page in a poorly crawled area can still be indexed if it receives direct links or traffic.

Furthermore, technical structure remains fundamental. A popular section that's poorly linked internally, with prohibitive loading times or repeated server errors, won't benefit from privileged crawling despite its theoretical popularity.

In Which Cases Can This Logic Penalize a Site?

Sites with a flat architecture where all content appears at the same level risk losing Google's attention on their strategic content. Without clear segmentation, the search engine cannot prioritize effectively.

Sites that mix quality content and low-value automated pages in the same directories create algorithmic confusion. Google may then reduce crawling of the entire section, penalizing even good pages.

Practical impact and recommendations

How Can You Optimize Your Site Architecture to Benefit from This Mechanism?

Clearly segment your site into coherent thematic directories. Isolate your high-value content (blog, guides, news) in dedicated sections, separate from utility or administrative pages.

Create a logical URL hierarchy that reflects the strategic importance of each section. Avoid burying your best content among technical pages, terms of service, or dormant archives in a confusing structure.
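To see how your URLs are currently distributed across directories, a quick inventory helps. The following is a minimal sketch, assuming you already have a list of URLs (for example, exported from your sitemap). The function names and the example.com URLs are hypothetical, and grouping by the first path segment is just one simple way to define a "section".

```python
from collections import Counter
from urllib.parse import urlparse


def top_level_section(url: str) -> str:
    """Return the first directory of a URL as '/name/', or '/' for root-level pages."""
    path = urlparse(url).path
    segments = [s for s in path.split("/") if s]
    if not segments:
        return "/"
    # A single segment without a trailing slash (e.g. /terms) is a root-level page,
    # whereas /blog/ is treated as the index of the /blog/ section.
    if len(segments) == 1 and not path.endswith("/"):
        return "/"
    return f"/{segments[0]}/"


def section_counts(urls):
    """Count how many URLs fall into each top-level section."""
    return Counter(top_level_section(u) for u in urls)


# Hypothetical URLs, for illustration only.
urls = [
    "https://example.com/blog/crawl-budget-basics",
    "https://example.com/blog/internal-linking",
    "https://example.com/guides/site-architecture",
    "https://example.com/terms",
    "https://example.com/",
]

for section, count in section_counts(urls).most_common():
    print(section, count)
```

A large share of URLs landing in "/" suggests a flat architecture, where strategic content is not separated from utility pages.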

What Concrete Actions Send the Right Signals to Google?

Focus your link building efforts on strategic sections you want crawled frequently. Obtain quality backlinks pointing directly to these priority directories, not just to the homepage.

Generate user engagement on these sections: social shares, comments, high reading time. These behavioral signals reinforce the message that this area deserves special attention from Googlebot.

- Audit your Search Console to identify under-crawled sections with potential

- Restructure your URLs to isolate premium content in dedicated directories

- Optimize internal linking to push link equity toward strategic sections

- Implement a regular editorial strategy on sections to prioritize

- Obtain thematic backlinks to your priority directories

- Delete or isolate low-value sections that dilute crawl: robots.txt stops crawling, while noindex removes pages from the index but only works if the pages remain crawlable

- Monitor crawl activity by directory in your server logs and GSC

- Test the impact of changes with temporal tracking of indexing statuses
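If you choose the robots.txt route from the checklist above, it is worth checking which URLs your rules actually block before deploying them. Here is a minimal sketch using Python's standard `urllib.robotparser`. The rules, directory names, and URLs are hypothetical. Note that this parser does simple prefix matching and, as far as we know, does not implement Google's wildcard extensions, so treat it as a rough check rather than a replica of Googlebot's behavior.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules isolating two low-value sections.
ROBOTS_TXT = """\
User-agent: *
Disallow: /internal-search/
Disallow: /print/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


# Hypothetical URLs, for illustration only.
candidates = [
    "https://example.com/blog/crawl-budget-basics",
    "https://example.com/internal-search/?q=shoes",
    "https://example.com/print/page-1",
]

for url in blocked_urls(ROBOTS_TXT, candidates):
    print("blocked:", url)
```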

How Do You Measure the Effectiveness of Your Section-Based Crawl Strategy?

Use the Crawl Stats report in Google Search Console, complemented by server log analysis, to follow crawl activity by directory. Identify changes in crawl frequency after your optimizations and correlate them with organic traffic performance.
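Server logs are one way to get a per-directory view of crawl activity. The sketch below assumes access logs in the common "combined" format and counts requests whose user agent contains "Googlebot", grouped by day and top-level directory. The log lines are made-up samples, and the regular expression is an assumption to adapt to your server's format. Because user agents can be spoofed, a production version should also verify that requests really come from Google (for example, via reverse DNS).

```python
import re
from collections import Counter

# Assumed "combined" access log format; adjust to your server's configuration.
LOG_PATTERN = re.compile(
    r'\[(?P<day>[^:]+):[^\]]+\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def section_of(path: str) -> str:
    """Map a request path to its top-level directory ('/' for root-level pages)."""
    path = path.split("?", 1)[0]  # drop the query string
    segments = [s for s in path.split("/") if s]
    if not segments or (len(segments) == 1 and not path.endswith("/")):
        return "/"
    return f"/{segments[0]}/"


def googlebot_hits_by_section(lines):
    """Count requests with a Googlebot user agent per (day, section)."""
    hits = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        hits[(match.group("day"), section_of(match.group("path")))] += 1
    return hits


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Made-up sample lines, for illustration only.
SAMPLE_LINES = [
    f'66.249.66.1 - - [10/Mar/2025:06:25:14 +0000] "GET /blog/post-a HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2025:07:02:41 +0000] "GET /blog/post-b?utm=x HTTP/1.1" 200 4810 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2025:09:15:03 +0000] "GET /archive/2014/old-post HTTP/1.1" 200 3900 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Mar/2025:09:20:00 +0000] "GET /blog/post-a HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for (day, section), count in sorted(googlebot_hits_by_section(SAMPLE_LINES).items()):
    print(day, section, count)
```

Run over several weeks of logs, the same counts show which directories Googlebot visits daily and which ones it rarely touches.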

Create custom segments in your analytics tools to specifically track strategic sections. Measure the evolution of indexed pages, organic traffic, and average positions by directory over several months.
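To compare two periods, a simple relative change per directory is often enough. The sketch below assumes you already have one count per section for each period (crawl hits, indexed pages, or organic sessions). The function name and the figures are hypothetical.

```python
def relative_change(before, after):
    """Return the relative change per section between two periods.

    Sections with no baseline (absent or zero in `before`) map to None,
    since a percentage change is undefined there.
    """
    changes = {}
    for section in sorted(set(before) | set(after)):
        old = before.get(section, 0)
        new = after.get(section, 0)
        changes[section] = None if old == 0 else (new - old) / old
    return changes


# Hypothetical counts per section, for illustration only.
before = {"/blog/": 100, "/archive/": 40}
after = {"/blog/": 150, "/archive/": 30, "/guides/": 12}

for section, change in relative_change(before, after).items():
    label = "new section" if change is None else f"{change:+.0%}"
    print(section, label)
```

Tracking the same metric at regular intervals makes it easier to tell a lasting shift in crawl priority from normal week-to-week variation.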
