Official statement

What you need to understand

What is Google's official position on the impact of problematic pages?

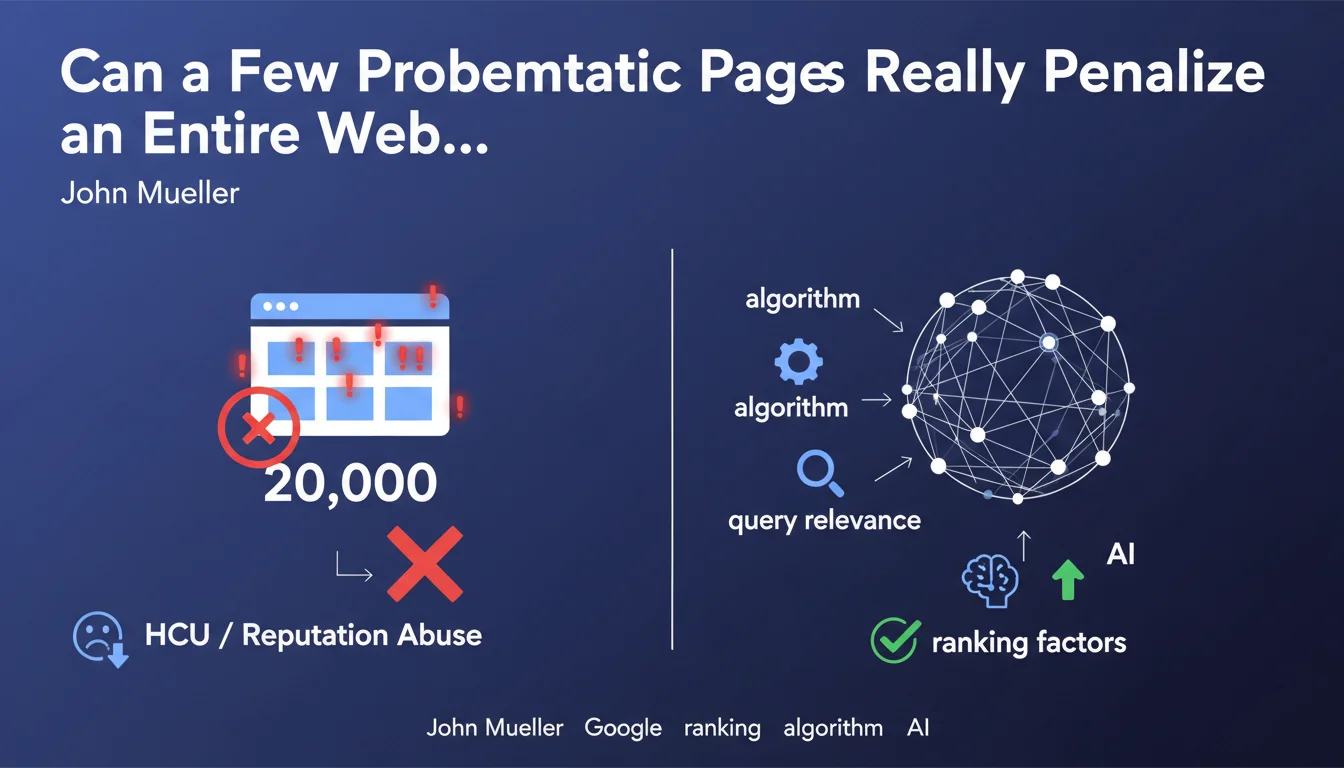

John Mueller clarified a crucial point on Reddit: a handful of problematic pages is generally not enough to penalize an entire site. Specifically, 10 pages affected by the Helpful Content Update (HCU) or reputation abuse on a 20,000-page site should not trigger a global ranking drop.

This statement puts Google's algorithmic demotion mechanisms into perspective. It suggests that the search engine evaluates quality proportionally and in context, rather than demoting an entire site over a few weak pieces of content.

What are the real factors behind a ranking drop?

Mueller emphasizes the need to avoid hasty conclusions. A visibility decline can result from multiple causes that have nothing to do with isolated problematic pages.

Fluctuations can be explained by continuous algorithm changes, shifts in user search intent, or stronger competitor performance. A drop that coincides with an update was not necessarily caused by it.

Why is it difficult to identify the exact cause of traffic loss?

Google's algorithm integrates hundreds of signals that interact in complex ways. A drop can even occur without any direct link to a recent update, making diagnosis particularly tricky.

Shifting query relevance also plays a major role. Content that ranked well yesterday may become less relevant today if search intent or the competitive landscape changes.

- 10 problematic pages out of 20,000 generally do not cause a global penalty

- Ranking drops often result from multiple combined factors

- Shifts in search intent can explain ranking losses

- Algorithmic changes are continuous and progressive

- A correlation in time does not imply a cause-and-effect relationship

SEO expert opinion

Is this statement consistent with field observations?

My 15 years of experience largely confirm this position. I have seen numerous sites with entire sections of weak content maintain excellent performance on their main pages. In practice, Google appears to apply a granular and proportional evaluation.

However, there is an important nuance: if these problematic pages represent your dominant editorial model or affect your strategic pages, the impact can be much more significant. The ratio of problematic pages to total pages matters, but so does their position in the site architecture.

In which specific cases should this general rule be nuanced?

Mueller's statement applies to isolated and proportionally minor cases. But certain situations create disproportionate effects that warrant vigilance.

Problematic pages located high in the hierarchy, hub pages with strong internal authority, or pages generating massive traffic can affect the overall perception of the site. Similarly, a recurring pattern, even across only a few pages, can signal a systemic problem to the algorithm.

What is the real lesson to learn from this clarification?

Mueller invites us to adopt a rigorous diagnostic approach rather than knee-jerk reactions. Too many SEOs systematically attribute their problems to the latest Google update, when the causes often lie elsewhere.

This statement underscores the importance of a multifactorial analysis: changes in the competitive landscape, content obsolescence, emerging technical issues, or simply competitors that answer search intent better. A sound investigation methodology takes precedence over quick conclusions.

Practical impact and recommendations

How should you properly diagnose a ranking drop?

Before panicking or questioning everything, adopt a structured analysis methodology. Start by segmenting your data to identify whether the drop is global or localized to certain page types.

Analyze Search Console data by page group, by search intent, and by time period. Compare the results against your competitors' trends on the same queries to separate issues specific to your site from general SERP changes.

- Segment analysis by page types and search intents

- Compare your performance with that of direct competitors

- Check technical changes that occurred during the relevant period

- Examine how fresh your content is compared with competing pages

- Analyze whether search intent has evolved for your key queries

- Identify correlations with documented algorithmic updates
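The segmentation step above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive method: it assumes you have exported per-page click counts for two periods (for example from Search Console), and it classifies pages by their first URL path segment. The `page_type` and `segment_changes` helpers, the row layout, and the example.com URLs are all hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse


def page_type(url: str) -> str:
    """Classify a URL by its first path segment (an assumed convention)."""
    path = urlparse(url).path.strip("/")
    return path.split("/")[0] if path else "home"


def segment_changes(rows):
    """Return the relative change in clicks per page type.

    rows: iterable of (url, period, clicks), where period is
    "before" or "after". Segments with no "before" clicks are skipped
    because a relative change is undefined for them.
    """
    totals = defaultdict(lambda: {"before": 0, "after": 0})
    for url, period, clicks in rows:
        totals[page_type(url)][period] += clicks
    return {
        segment: (t["after"] - t["before"]) / t["before"]
        for segment, t in totals.items()
        if t["before"] > 0
    }


# Hypothetical export: clicks per page for two comparable periods.
rows = [
    ("https://example.com/blog/a", "before", 400),
    ("https://example.com/blog/a", "after", 200),
    ("https://example.com/blog/b", "before", 100),
    ("https://example.com/blog/b", "after", 50),
    ("https://example.com/products/x", "before", 300),
    ("https://example.com/products/x", "after", 300),
]

changes = segment_changes(rows)
for segment, change in sorted(changes.items()):
    print(f"{segment}: {change:+.0%}")
```

If one segment drops sharply while the others hold steady, the problem is likely localized to that page type rather than site-wide.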

What interpretation errors should you absolutely avoid?

The most common mistake is to overreact to normal fluctuations. Google rankings fluctuate daily within a certain range that does not justify any particular action.
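One rough way to tell a normal fluctuation from a real drop is to compare recent traffic against the spread of a baseline period. The sketch below is only an illustration: the `outside_normal_range` helper, the threshold of two standard deviations, and the sample figures are assumptions, and it ignores weekly seasonality.

```python
from statistics import mean, stdev


def outside_normal_range(baseline, recent, k=2.0):
    """Return True if the recent average falls more than k standard
    deviations below the baseline average.

    baseline: daily clicks during a stable reference period (at least 2 values)
    recent:   daily clicks during the period under investigation
    k:        tolerance in standard deviations (an arbitrary choice here)
    """
    lower_bound = mean(baseline) - k * stdev(baseline)
    return mean(recent) < lower_bound


# Hypothetical daily click counts.
baseline = [100, 104, 96, 102, 98, 101, 99]

small_dip = outside_normal_range(baseline, [97, 99, 96])
large_drop = outside_normal_range(baseline, [80, 78, 82])
print(small_dip, large_drop)
```

With these figures, the small dip stays within the usual range while the large drop does not, so only the second case would justify further investigation.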

Also avoid making sweeping changes to your site after a drop before you have identified the real cause. Such hasty changes often create more problems than they solve, and they make it impossible to identify the true source of the initial problem.

What should you do concretely when facing traffic loss?

Start with a comprehensive technical audit to rule out obvious causes: loading times, crawl errors, indexing issues. These elements are measurable and can be corrected quickly.

Then, evaluate the comparative quality of your content versus the pages that have overtaken you. Competitive analysis often reveals that your content has become outdated or less comprehensive than newly ranked pages.

- Conduct a thorough technical audit (crawl, indexing, performance)

- Compare your content to newly ranked pages

- Check the freshness and currency of your strategic content

- Analyze the evolution of user engagement signals

- Examine your inbound link profile to detect potential losses

- Test different optimizations on page samples before global deployment
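The last point, testing on a sample before a global rollout, can be sketched as a random split into a test group and a control group. This is a minimal sketch under stated assumptions: the `split_test_control` helper, the 20% test fraction, the fixed seed, and the URLs are hypothetical, and in practice the two groups should be comparable in page type and traffic.

```python
import random


def split_test_control(urls, test_fraction=0.2, seed=42):
    """Randomly split URLs into a test group and a control group.

    The fixed seed makes the split reproducible, so the same pages
    stay in the same group when the analysis is rerun.
    """
    rng = random.Random(seed)
    shuffled = list(urls)
    rng.shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[:n_test], shuffled[n_test:]


# Hypothetical list of pages of the same type.
urls = [f"https://example.com/blog/post-{i}" for i in range(50)]
test_group, control_group = split_test_control(urls)

print(len(test_group), "pages to optimize,", len(control_group), "left unchanged")
```

Apply the optimization to the test group only, then compare how both groups evolve over the same period before deciding whether to deploy it everywhere.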
