Official statement

<a href="/good-link">Followed link</a>

<a href="/good-link" onclick="changePage('good-link')">Followed link</a>

These, however, will not be followed:

<span onclick="changePage('bad-link')">Unfollowed link</span>

<a onclick="changePage('bad-link')">Unfollowed link</a>

In short, if a link's URL is generated only by JavaScript (for example, inside an onclick handler), that link will not be followed. The URL must be explicitly present in the href attribute for Googlebot to understand and follow the link.

What you need to understand

Which Links Does Googlebot Actually Follow?

Google clarified its position during a technical conference: Googlebot only follows links whose URL is explicitly present in the href attribute. A standard link written as <a href="/page"> is the form Googlebot can reliably discover and follow.

On the other hand, links dynamically generated by JavaScript without an href attribute (such as <span onclick="changePage()"> or <a onclick="..."> without href) are not followed. This technical distinction remains fundamental for page discoverability.
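The rule can be expressed as a small check. This is a minimal sketch, not Google's actual logic: `isFollowableLink` is a hypothetical helper, elements are modeled as plain objects, and the treatment of `javascript:` pseudo-URLs and a bare `#` as "no real URL" is an assumption added for illustration.

```javascript
// Hypothetical helper: decides whether an element would count as a
// followable link under the rule described above.
// `element` is a plain object, e.g. { tag: "a", attrs: { href: "/page" } }.
function isFollowableLink(element) {
  const tag = String(element.tag || "").toLowerCase();
  const attrs = element.attrs || {};

  // Only <a> elements qualify; span, div, and button never do.
  if (tag !== "a") return false;

  // The URL must be explicitly present in the href attribute.
  const href = typeof attrs.href === "string" ? attrs.href.trim() : "";
  if (href === "") return false;

  // Assumption: javascript: pseudo-URLs and a bare "#" carry no real URL.
  if (/^javascript:/i.test(href) || href === "#") return false;

  return true;
}

// The four examples from the official statement, in the same order.
const examples = [
  { tag: "a", attrs: { href: "/good-link" } },
  { tag: "a", attrs: { href: "/good-link", onclick: "changePage('good-link')" } },
  { tag: "span", attrs: { onclick: "changePage('bad-link')" } },
  { tag: "a", attrs: { onclick: "changePage('bad-link')" } },
];

const results = examples.map(isFollowableLink);
console.log(results); // [ true, true, false, false ]
```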

Why Does This Technical Limitation Still Persist Today?

Despite advances in Google's Web Rendering Service, link analysis remains based on the initial DOM rather than on complete JavaScript execution. This approach allows Google to efficiently crawl billions of pages without overwhelming its resources.

The more technically complex a link's construction, the higher the risk that it won't be detected. Google prioritizes simplicity and robustness over exhaustive analysis of all possible JavaScript patterns.

What Are the Implications for Modern Site Architecture?

This directive imposes a major structural constraint: all critical navigation links must exist in static HTML. Single-page applications (SPAs) or JavaScript-heavy sites must therefore implement a hybrid strategy.

- Href attribute mandatory for all links that need to be crawled

- Onclick events can coexist with href without any problem

- Non-<a> elements (span, div, button) are never considered as links

- JavaScript can enhance behavior but must not replace the basic HTML structure

- This rule applies regardless of JavaScript rendering by Googlebot
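One way to let onclick-style navigation coexist with href is to treat the href as the single source of truth and have JavaScript intercept the click. The sketch below assumes markup such as <a href="/good-link">Followed link</a>; `handleLinkClick` is a hypothetical name, and `navigate` stands in for the site's own routing function (for instance the `changePage` used in the examples above).

```javascript
// Hypothetical click handler: the URL always comes from the href attribute,
// so the link stays crawlable even though JavaScript handles the click.
function handleLinkClick(event, navigate) {
  // Find the nearest real link around the clicked element.
  const link = event.target.closest("a[href]");
  if (!link) return false; // not a real link: leave the event alone

  // Let the browser handle modified clicks (new tab, new window, etc.).
  if (event.ctrlKey || event.metaKey || event.shiftKey || event.button > 0) {
    return false;
  }

  // Take over navigation, but read the destination from the href.
  event.preventDefault();
  navigate(link.getAttribute("href"));
  return true;
}

// In a browser this could be wired up once for the whole page:
// document.addEventListener("click", (e) => handleLinkClick(e, changePage));
```

If JavaScript fails to load, the link still works as a normal navigation, which is the fallback behavior the list above asks for.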

SEO Expert opinion

Is This Statement Consistent with Field Observations?

Yes, absolutely. In my audits, links without href consistently fail to pass PageRank or lead to the discovery of new pages. Tests with Search Console show that pages reachable only through such links remain unindexed even after months.

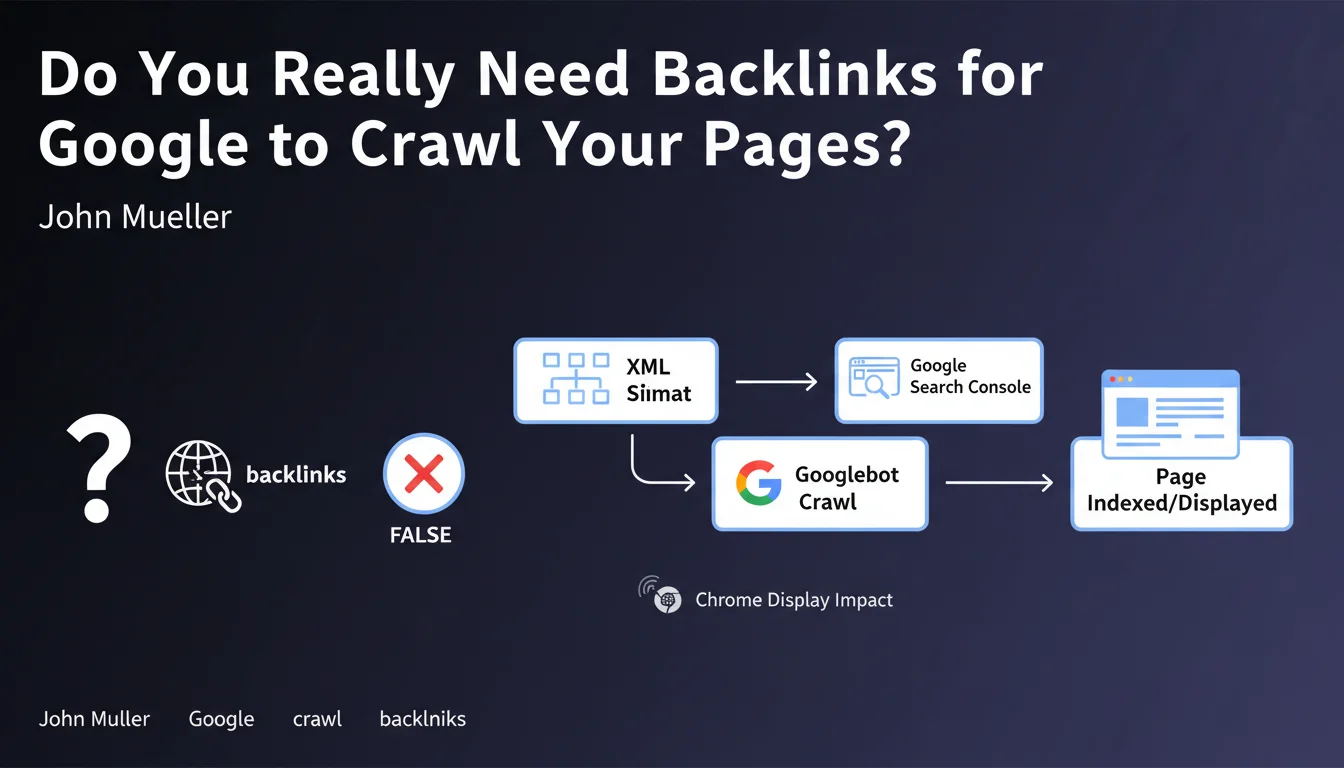

However, there's an important nuance: Google can sometimes discover URLs through other means (sitemaps, external backlinks, Chrome browsing history) and index them despite the absence of crawlable links. But this never compensates for the lack of structured internal linking.

Why Does Google Maintain This Seemingly Conservative Approach?

The reason is twofold. First, scalability: analyzing the JavaScript of every page costs far more resources than parsing static HTML. Second, reliability: HTML remains the universal foundation of the web, independent of frameworks and versions.

This approach also allows Google to maintain the quality of its index. An explicit HTML link is a clear intentional signal, while a dynamically generated link might result from a bug or unintended manipulation.

In Which Cases Does This Rule Cause the Most Problems?

React, Vue, or Angular applications in SPA mode are the most impacted. Without Server-Side Rendering (SSR) or pre-rendering, their navigation relies entirely on JavaScript, creating an internal linking structure invisible to Googlebot.

E-commerce sites with dynamically generated filters and facets also encounter this problem. If pagination or category links don't have a valid href attribute, entire sections of the catalog can become inaccessible to crawling.
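For pagination, the fix usually amounts to emitting a real href for every page instead of a click handler. The following is a minimal sketch under stated assumptions: `renderPagination` is a hypothetical helper, the `?page=N` URL scheme is invented for illustration, and `basePath` is assumed to be a trusted, already-escaped path.

```javascript
// Hypothetical helper: builds pagination markup in which every page is a
// real <a href="...">, so each page of the catalog stays reachable.
function renderPagination(basePath, totalPages, currentPage) {
  const items = [];
  for (let page = 1; page <= totalPages; page++) {
    // Assumption: page 1 lives at the base path, later pages use ?page=N.
    const href = page === 1 ? basePath : `${basePath}?page=${page}`;
    const current = page === currentPage ? ' aria-current="page"' : "";
    items.push(`<li><a href="${href}"${current}>${page}</a></li>`);
  }
  return `<ul class="pagination">${items.join("")}</ul>`;
}

const paginationHtml = renderPagination("/category/shoes", 3, 2);
console.log(paginationHtml);
```

A click handler can still be attached to these links for a smoother experience; the point is only that the URL is present in the markup.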

Practical impact and recommendations

How Can You Quickly Audit Your Site's Links?

Use Google Search Console to identify orphan pages: if important URLs don't appear in coverage reports even though they're in your sitemap, it's often a link problem. Then crawl your site with Screaming Frog with JavaScript disabled: only static HTML links will appear.
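The comparison between sitemap URLs and static links can be approximated with a short script. This is a rough sketch, not how Screaming Frog or Googlebot work: `extractHrefs` and `findOrphanCandidates` are hypothetical names, the regular expression is only an approximation of real HTML parsing, and URLs are assumed to be root-relative paths written identically in the sitemap and in the href attributes.

```javascript
// Hypothetical helper: collects href values of <a> tags from raw HTML,
// i.e. what is visible without executing any JavaScript.
function extractHrefs(rawHtml) {
  // Approximation: matches <a ... href="..."> or <a ... href='...'>.
  const pattern = /<a\s+(?:[^>]*?\s)?href\s*=\s*(?:"([^"]*)"|'([^']*)')/gi;
  const hrefs = new Set();
  for (const match of rawHtml.matchAll(pattern)) {
    const href = (match[1] !== undefined ? match[1] : match[2]).trim();
    if (href !== "") hrefs.add(href);
  }
  return hrefs;
}

// Hypothetical helper: returns sitemap paths that no static link points to.
function findOrphanCandidates(sitemapPaths, pagesHtml) {
  const linked = new Set();
  for (const html of pagesHtml) {
    for (const href of extractHrefs(html)) linked.add(href);
  }
  return sitemapPaths.filter((path) => !linked.has(path));
}

// Example using the markup from the official statement.
const pagesHtml = [
  '<a href="/good-link">Followed link</a>' +
    "<span onclick=\"changePage('bad-link')\">Unfollowed link</span>" +
    "<a onclick=\"changePage('bad-link')\">Unfollowed link</a>",
];
const orphans = findOrphanCandidates(["/good-link", "/bad-link"], pagesHtml);
console.log(orphans); // [ '/bad-link' ]
```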

Manually inspect your critical templates using Chrome DevTools. Look for clickable elements: if they're not <a> tags with a valid href attribute, they're invisible to Googlebot.
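The manual inspection can be sped up with a snippet pasted into the DevTools console. This is a sketch under assumptions: `findUncrawlableClickables` is a hypothetical helper, and "clickable" is approximated by an inline onclick attribute or role="link", so handlers attached with addEventListener will not be detected.

```javascript
// Hypothetical audit helper for the DevTools console.
// Returns clickable-looking elements that are not <a> tags with a
// non-empty href attribute.
function findUncrawlableClickables(root) {
  // Assumption: inline onclick or role="link" marks an element as clickable.
  // All <a> elements are included so that anchors without href are caught.
  const candidates = root.querySelectorAll('[onclick], [role="link"], a');
  return Array.from(candidates).filter((el) => {
    const isAnchor = el.tagName.toLowerCase() === "a";
    const href = (el.getAttribute("href") || "").trim();
    return !(isAnchor && href !== "");
  });
}

// In the browser console:
// console.table(findUncrawlableClickables(document).map((el) => el.outerHTML));
```

Note that DevTools shows the DOM after JavaScript has run, so this complements the raw-HTML crawl rather than replacing it.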

Which Technical Fixes Should You Prioritize?

Start by converting all main navigation elements (menus, footer, breadcrumbs) into real HTML links. This is the most critical task because it affects the entire site. Make sure every <a> has an href, even if JavaScript handles the click.

For modern JavaScript applications, implement SSR (Next.js, Nuxt.js) or pre-rendering (Prerender.io, Rendertron). These solutions generate static HTML with valid links while preserving the dynamic user experience.

- Audit all templates with manual inspection of raw HTML source code

- Verify that 100% of navigation links use <a href="..."> tags

- Test the site with JavaScript disabled to identify inaccessible areas

- Implement Server-Side Rendering for critical SPA applications

- Add HTML links as fallback even when JavaScript handles navigation

- Regularly monitor orphan pages in Search Console

- Train developers on HTML best practices for SEO

- Document coding standards including the requirement for valid hrefs

Should You Systematically Overhaul Your Site's Technical Architecture?

Not necessarily. If your site is already well crawled and indexed, focus on new features to avoid repeating these mistakes. However, if you're experiencing indexation issues or deficient internal linking, a technical overhaul may be necessary.

This overhaul often involves complex trade-offs between performance, user experience, and SEO. Analyzing existing code, identifying problematic patterns, and implementing solutions like SSR require advanced technical expertise.
