Official statement

What you need to understand

What is keyword stuffing and why is this statement important?

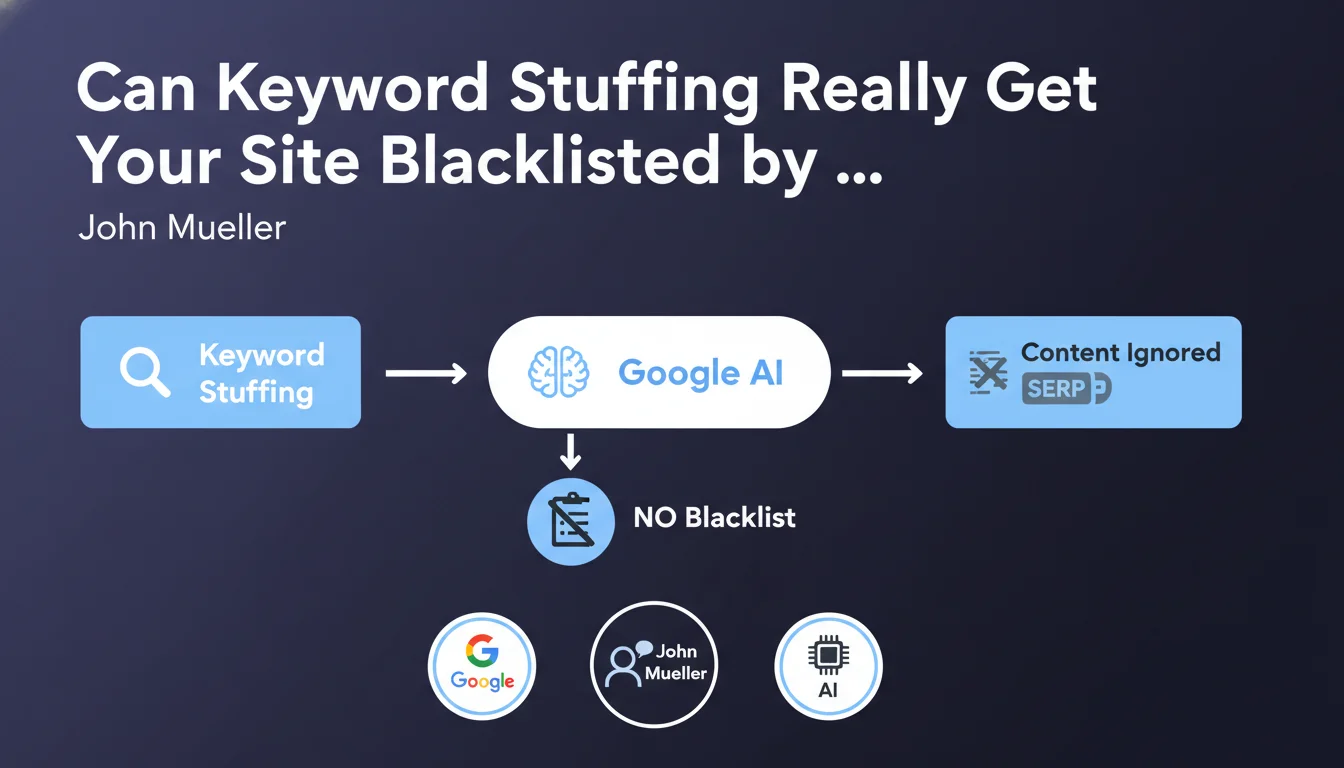

Keyword stuffing refers to the practice of excessively repeating keywords in content to attempt to manipulate rankings. This outdated SEO technique was popular in the 2000s, when Google's algorithms were less sophisticated.

John Mueller clarifies a major misconception here: this practice does not lead to complete removal of a site from Google's index. The algorithm has evolved to the point of simply ignoring these excessive repetitions rather than imposing drastic penalties.

How does Google actually handle keyword stuffing today?

Rather than penalizing or blacklisting, Google now uses a neutralization approach. The algorithms detect abnormal repetitions and simply ignore them when calculating relevance.

This means that keyword stuffing provides no benefit, but it also doesn't trigger a severe manual action. The affected content simply loses effectiveness without compromising the entire site.

What are the actual conditions for a Google blacklist?

Complete removal of a site from Google's index is extremely rare and reserved for the most serious cases. This typically involves sites engaged in hacking or phishing, or sites distributing malware at scale.

Pure manipulation techniques such as massive artificial link networks, aggressive cloaking, or large-scale scraping can also lead to severe penalties, but even in these cases, total blacklisting remains the exception.

- Keyword stuffing is detected and ignored by Google, not severely penalized

- Complete blacklisting is reserved for major violations (malware, phishing, hacking)

- The modern algorithm favors neutralization over punishment

- Excessive repetitions have provided no SEO advantage for many years

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. In 15 years of practice, I've observed that Google has progressively abandoned binary penalties (penalty/no penalty) in favor of a more nuanced approach. Sites with keyword stuffing don't disappear; they simply stagnate.

Natural language processing models (BERT, MUM) have significantly improved Google's ability to understand context and intent. This makes artificially repetitive content much easier to recognize and discount, without requiring manual action.

What important nuances should be added to this statement?

While keyword stuffing alone won't blacklist a site, it can nevertheless contribute to a negative overall quality assessment. A site that accumulates bad practices risks being classified as "low quality" by the algorithm.

Additionally, certain forms of keyword stuffing can be perceived as pure spam when combined with other techniques. For example, automatically generated pages with keyword stuffing and artificial links can cross the tolerance threshold.

In what contexts does this technique remain particularly counterproductive?

Keyword stuffing is particularly damaging on transactional or commercial pages. Users are looking for clear information and smooth navigation: content stuffed with keywords hurts conversion.

On sites subject to E-E-A-T evaluation (experience, expertise, authoritativeness, trustworthiness), especially in YMYL domains (health, finance), this practice can seriously compromise the site's perceived credibility and ranking potential.

Practical impact and recommendations

What should you actually do with this information?

Focus on natural writing and user intent rather than keyword density. Use synonyms, related terms, and develop the semantic field organically.

Favor an approach based on entities and concepts rather than mechanical keyword repetition. Google now understands relationships between concepts, making mechanical repetition obsolete.

Optimize for user satisfaction: content that precisely answers a question, clearly structured, achieves better results than text stuffed with keywords.

How can you audit your site to detect keyword stuffing?

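Use keyword density analysis tools to identify problematic pages. As a rule of thumb, a density above 3-4% for a single keyword is generally excessive. Google publishes no official threshold, so treat this figure as a trigger for manual review rather than a hard limit.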
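As a rough illustration of what such a tool computes, here is a minimal Python sketch. It assumes density means the number of words belonging to keyword occurrences divided by the total word count, which is only one of several conventions in use. The function names `keyword_density` and `flag_dense_pages` are hypothetical, and the 3% default threshold is just the rule of thumb mentioned above, not a value published by Google.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Return the percentage of words in `text` that belong to occurrences of `keyword`.

    Assumed convention: (occurrences * words in keyword) / total words * 100.
    Occurrences are counted without overlap and matching is case-insensitive.
    """
    words = re.findall(r"\w+", text.lower())
    target = re.findall(r"\w+", keyword.lower())
    if not words or not target:
        return 0.0

    size = len(target)
    hits = 0
    i = 0
    while i <= len(words) - size:
        if words[i:i + size] == target:
            hits += 1
            i += size  # skip past the match so occurrences never overlap
        else:
            i += 1
    return 100.0 * hits * size / len(words)


def flag_dense_pages(pages: dict[str, str], keyword: str, threshold: float = 3.0) -> list[str]:
    """Return the URLs whose density for `keyword` exceeds `threshold` percent.

    `pages` maps a URL to the plain text of that page (an illustrative input format).
    """
    return [url for url, text in pages.items() if keyword_density(text, keyword) > threshold]
```

Pages returned by `flag_dense_pages` are candidates for a manual read, not confirmed problems: short pages in particular can exceed the threshold with only one or two natural mentions.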

Perform a human reading of your content: if the text seems repetitive or artificial when read, it probably is for the algorithm too. Have real users test your pages.

Analyze behavioral signals in Google Analytics: a high bounce rate and low time on page for pages that rank well may indicate that the content is over-optimized and low in quality.
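The behavioral check can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `PageStats` fields stand in for data you would export yourself (ranking position usually comes from a separate source such as a search performance report), and the cutoffs of position 10, a 70% bounce rate, and 30 seconds are arbitrary placeholders to tune for your own site.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Per-page metrics; the field names are hypothetical, not an export schema."""
    url: str
    avg_position: float      # average search position (lower is better)
    bounce_rate: float       # fraction between 0 and 1
    avg_time_on_page: float  # seconds


def pages_to_review(
    pages: list[PageStats],
    max_position: float = 10.0,  # assumed meaning of "ranks well"
    min_bounce: float = 0.70,    # assumed "high" bounce rate
    max_time: float = 30.0,      # assumed "low" time on page, in seconds
) -> list[str]:
    """Return URLs that rank well yet show weak engagement on both signals."""
    return [
        p.url
        for p in pages
        if p.avg_position <= max_position
        and p.bounce_rate >= min_bounce
        and p.avg_time_on_page <= max_time
    ]
```

Requiring both weak signals at once keeps the list short. A page that answers a question quickly can have a high bounce rate without being low quality, so the result is a starting point for reading the pages, not a verdict.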

What mistakes should you absolutely avoid after this clarification?

Don't fall into the opposite extreme by under-optimizing your content. It remains important to include target keywords naturally, especially in structural elements (headings, opening paragraphs).

Avoid keeping old pages stuffed with keywords out of simple negligence. Even if they don't cause blacklisting, they dilute the overall perceived quality of your site and waste crawl budget.

- Write for the user first, optimize for Google second

- Use semantic variations and synonyms naturally

- Maintain keyword density below 2-3% per term

- Structure your content with descriptive and hierarchical headings

- Regularly audit your old content to eliminate over-optimization

- Measure user engagement as a quality indicator

- Prioritize depth and expertise over mechanical repetition

- Test the readability of your texts with real users
