Official statement

What you need to understand

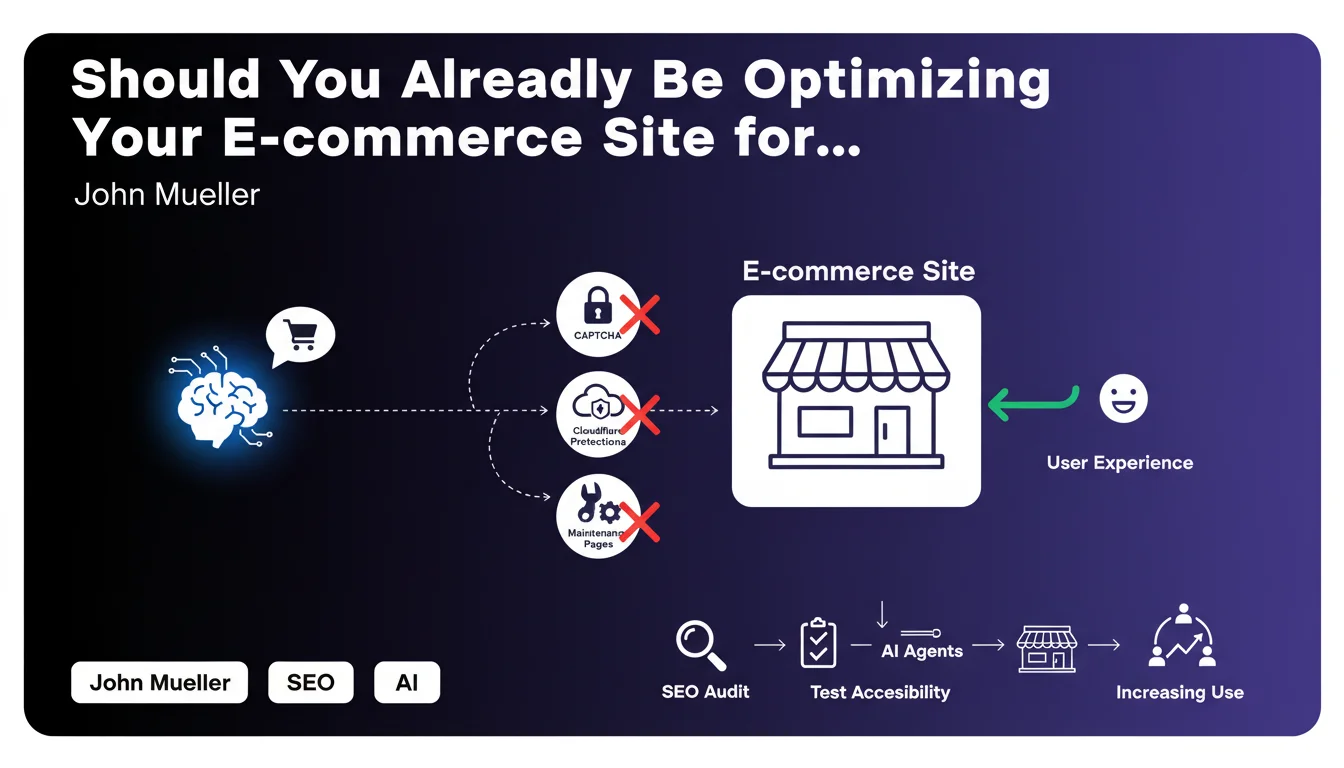

Google is acknowledging a major evolution in online shopping behavior: the growing use of conversational AI agents like ChatGPT to conduct searches and even make purchases. These automated agents browse websites to collect product information, compare prices, and potentially complete transactions.

The problem identified is simple yet critical: many e-commerce sites unintentionally block these AI agents with protections designed to stop malicious bots. CAPTCHAs, Cloudflare protections set to strict mode, maintenance pages, and other anti-bot checks can all prevent these agents from reaching the content.

This recommendation aligns with Google's long-standing focus on real user experience. If a legitimate user cannot access your site, even when acting through an AI agent, it costs you conversions and can send a negative signal for search rankings.

- AI agents are becoming a new access channel for e-commerce sites

- Traditional anti-bot protections can block these legitimate agents

- Accessibility for AI agents now sits alongside UX and technical SEO as a site concern

- This is about anticipating an emerging trend before it becomes mainstream

SEO Expert opinion

This recommendation is perfectly consistent with the evolution of search toward more conversational and AI-assisted experiences. Google is preparing the ground for an ecosystem where searches no longer happen solely through its interface, but also via third-party agents that aggregate information.

However, nuance is needed: the urgency depends heavily on your sector and audience. For B2B or niche sites, AI agent adoption for purchasing remains marginal. On the other hand, for mass-market e-commerce, particularly in tech, fashion, or electronics sectors, this trend is genuinely accelerating.

The real strategic question is: do you want your content to be aggregated by these AI agents? For some business models, this could cannibalize direct traffic. For others, it's an additional visibility opportunity.

Practical impact and recommendations

- Test your site's accessibility using tools that simulate AI agents (ChatGPT, Claude, etc.) to identify blocking issues

- Audit your Cloudflare rules and other WAFs: move away from "I'm Under Attack" mode toward more permissive configurations that allowlist verified agents

- Review CAPTCHA configuration: favor invisible versions or replace them with less intrusive solutions

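- Analyze server logs to identify the user-agents of legitimate AI agents and explicitly authorize their access, ideally after checking requests against the IP ranges their operators publish where available, since user-agent strings are easy to spoof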

- Handle maintenance pages properly: return an HTTP 503 status with a Retry-After header rather than blocking access outright

- Create a bot management policy that differentiates conversational AI agents from malicious scrapers

- Monitor new traffic sources in Analytics to detect the emergence of AI agents among your visitors

- Structure product data with schema.org markup (Product, Offer) so AI agents can reliably read names, prices, and availability
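The log-analysis step can be sketched in Python. This is a minimal example, assuming access logs in the Apache/Nginx "combined" format. The list of user-agent tokens (ChatGPT-User, OAI-SearchBot, GPTBot, Claude-User, ClaudeBot, PerplexityBot, Perplexity-User) is an illustrative assumption and should be checked against each vendor's current documentation. The script counts requests per AI agent and flags 403, 429 and 503 responses, which often mean a WAF, rate limiter or maintenance page turned the agent away.

```python
import re
from collections import Counter
from typing import Iterable, Optional, Tuple

# Illustrative, non-exhaustive list of user-agent substrings used by AI agents.
# Assumption: verify against each vendor's current documentation.
AI_AGENT_TOKENS = (
    "ChatGPT-User",
    "OAI-SearchBot",
    "GPTBot",
    "Claude-User",
    "ClaudeBot",
    "PerplexityBot",
    "Perplexity-User",
)

# Apache/Nginx "combined" format:
# host ident user [time] "request" status size "referer" "user-agent"
COMBINED_LOG = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[[^\]]*\] "[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

# Status codes that usually mean the request was refused or deferred.
BLOCKING_STATUSES = {"403", "429", "503"}


def match_agent(user_agent: str) -> Optional[str]:
    """Return the first known AI agent token found in a user-agent string."""
    for token in AI_AGENT_TOKENS:
        if token in user_agent:
            return token
    return None


def summarize(lines: Iterable[str]) -> Tuple[Counter, Counter]:
    """Count requests per AI agent, and how many of them were blocked."""
    hits: Counter = Counter()
    blocked: Counter = Counter()
    for line in lines:
        m = COMBINED_LOG.match(line)
        if not m:
            continue  # skip lines that are not in combined format
        agent = match_agent(m.group("agent"))
        if agent is None:
            continue  # not a recognised AI agent
        hits[agent] += 1
        if m.group("status") in BLOCKING_STATUSES:
            blocked[agent] += 1
    return hits, blocked
```

A high blocked count for one agent is a prompt to inspect the matching WAF or CAPTCHA rule. Because user-agent strings are trivially spoofed, these counts show what requests claimed to be, not who actually sent them.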
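A bot management policy that separates AI agents from scrapers impersonating them can be sketched as follows. One common approach, assumed here, is to trust a user-agent token only when the client IP also falls inside the ranges its operator publishes. The CIDR blocks below are placeholders taken from the RFC 5737 documentation ranges, and the three-way policy (allow, block, default rules) is hypothetical.

```python
import ipaddress
from typing import Dict, List

# Placeholder CIDR blocks (RFC 5737 documentation ranges). Assumption: replace
# them with the ranges each operator actually publishes for its agents.
PUBLISHED_RANGES: Dict[str, List[str]] = {
    "ChatGPT-User": ["192.0.2.0/24"],
    "PerplexityBot": ["198.51.100.0/24"],
}

# Hypothetical policy: what the edge should do for each classification.
POLICY = {
    "verified_ai_agent": "allow",   # skip CAPTCHA / JS challenge
    "spoofed_ai_agent": "block",    # claims an agent token but IP does not match
    "unknown": "default_rules",     # fall through to normal bot management
}


def classify(user_agent: str, client_ip: str) -> str:
    """Classify a request by its claimed agent token and its source IP."""
    ip = ipaddress.ip_address(client_ip)
    for token, cidrs in PUBLISHED_RANGES.items():
        if token in user_agent:
            if any(ip in ipaddress.ip_network(cidr) for cidr in cidrs):
                return "verified_ai_agent"
            return "spoofed_ai_agent"
    return "unknown"


def decide(user_agent: str, client_ip: str) -> str:
    """Map a request to the policy action for its classification."""
    return POLICY[classify(user_agent, client_ip)]
```

Keeping the ranges in data rather than code makes it easier to refresh them when operators update their published lists.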
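Returning a proper 503 during maintenance tells crawlers and agents that the outage is temporary, and Retry-After suggests when to come back. The sketch below uses a plain Python WSGI application as a stand-in for whatever stack actually serves the site; the maintenance flag and the one-hour Retry-After value are assumptions.

```python
from typing import Callable, Iterable

RETRY_AFTER_SECONDS = 3600  # assumption: maintenance expected to last about an hour


def make_app(maintenance: bool) -> Callable:
    """Return a WSGI app that answers 503 + Retry-After while in maintenance."""

    def app(environ: dict, start_response: Callable) -> Iterable[bytes]:
        if maintenance:
            body = b"<h1>Down for maintenance</h1><p>Please try again shortly.</p>"
            start_response(
                "503 Service Unavailable",
                [
                    ("Content-Type", "text/html; charset=utf-8"),
                    # Seconds form of Retry-After; an HTTP-date is also valid.
                    ("Retry-After", str(RETRY_AFTER_SECONDS)),
                    ("Content-Length", str(len(body))),
                ],
            )
            return [body]
        # Normal operation: serve the page with a 200.
        body = b"<h1>Catalog</h1>"
        start_response(
            "200 OK",
            [
                ("Content-Type", "text/html; charset=utf-8"),
                ("Content-Length", str(len(body))),
            ],
        )
        return [body]

    return app
```

For a local check, `wsgiref.simple_server.make_server("", 8000, make_app(True)).serve_forever()` serves it, and `curl -I http://localhost:8000/` should show the 503 status and the Retry-After header.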
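For the schema.org point, product pages usually embed a JSON-LD block. A minimal Python helper that renders one is shown below, assuming a single offer per product. The function name and the example values are hypothetical, while Product, Offer, sku, price, priceCurrency, availability and the InStock/OutOfStock values are standard schema.org vocabulary.

```python
import json


def product_jsonld(name: str, sku: str, price: float, currency: str,
                   in_stock: bool, url: str) -> str:
    """Render a schema.org Product with one Offer as a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": f"{price:.2f}",
            "priceCurrency": currency,  # ISO 4217 code, e.g. "EUR"
            "availability": "https://schema.org/InStock" if in_stock
            else "https://schema.org/OutOfStock",
        },
    }
    payload = json.dumps(data, ensure_ascii=False)
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'
```

Generating the markup from the same data that renders the visible page helps keep the structured price and availability consistent with what shoppers, and agents, actually see.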
