Official statement

What you need to understand

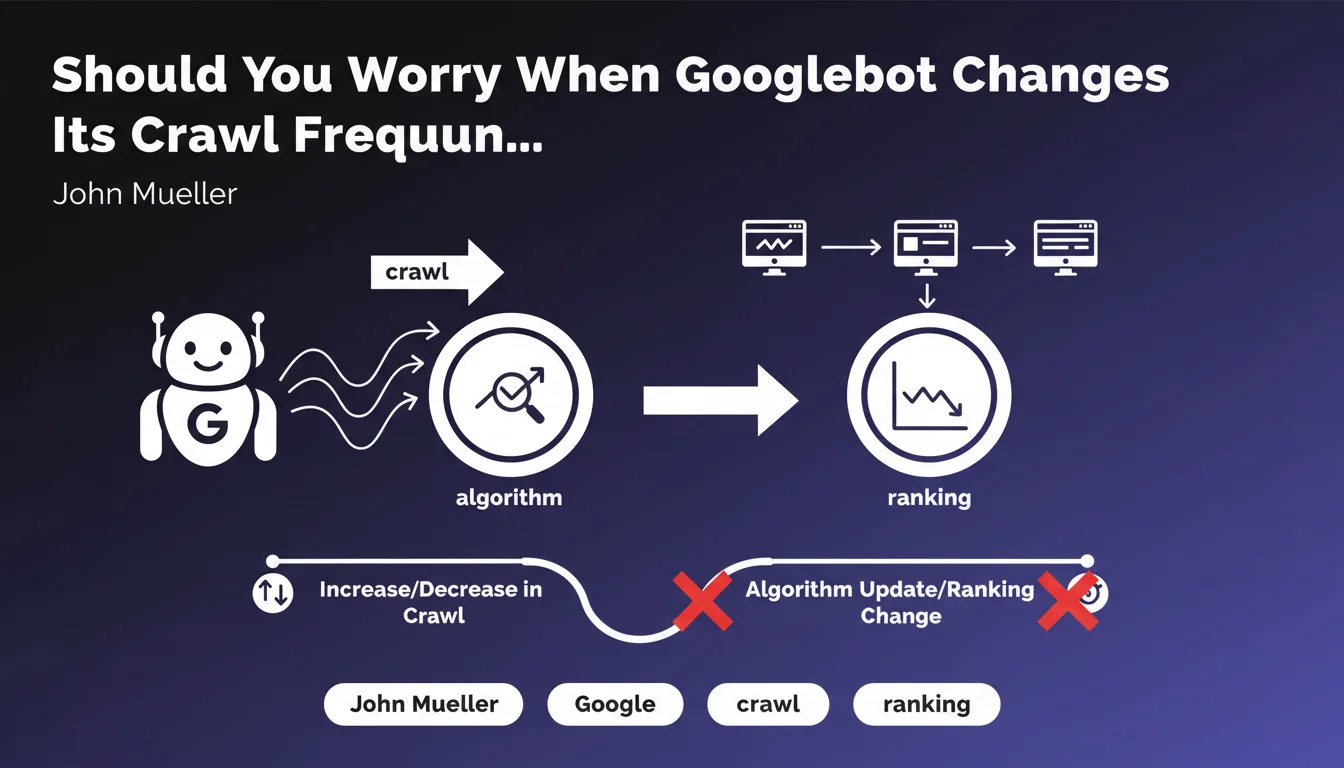

SEO practitioners often watch Googlebot activity in their log files closely, hoping to spot early signs of a major algorithm change. This statement clarifies a crucial point: there is no direct link between crawl fluctuations and the rollout of algorithm updates.

Concretely, a sudden increase in the number of pages Google crawls does not predict the arrival of a Core Update. Similarly, a drop in crawl activity does not signal that an algorithmic penalty is on the way. The two systems operate independently.

Crawl variations follow the crawling system's own logic: changes in the crawl budget allocated to your site, changes to your architecture, newly added pages, faster server response times, and so on. These factors are unrelated to the algorithmic adjustments that determine page rankings.

- Crawl and ranking are two distinct processes at Google

- An increase in crawl does not guarantee improved rankings

- A decrease in crawl does not necessarily signal a penalty

- Observed correlations between crawl and updates are purely coincidental

- Crawl variations must be analyzed according to their own technical criteria

SEO Expert opinion

This statement is consistent with field observations from recent years. SEO practitioners tend to over-interpret crawl variations and sometimes raise false alarms when they notice changes in their server logs. The technical reality supports this independence: Google's crawling system and ranking system are architecturally separate.

However, an important nuance deserves to be mentioned. While crawl does not predict updates, a site that is not properly crawled cannot fully benefit from algorithmic improvements. Insufficient crawl can therefore indirectly impact your performance during an update, not because of the algorithm itself, but simply because your new pages or modifications are not discovered quickly enough.
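One way to check whether pages are going undiscovered is to compare the URLs listed in your XML sitemap against the paths Googlebot has actually requested. The sketch below is a minimal illustration, not a definitive tool: the function name `uncrawled_sitemap_paths`, the sample sitemap, and the set of crawled paths are all hypothetical, and it assumes a single standard sitemap file (not a sitemap index) on one host.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content, used only for illustration.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/products/a</loc></url>
  <url><loc>https://example.com/products/new</loc></url>
</urlset>"""


def uncrawled_sitemap_paths(sitemap_xml, crawled_paths):
    """Return the sorted sitemap paths that do not appear in crawled_paths."""
    root = ET.fromstring(sitemap_xml)
    sitemap_paths = {
        # Keep only the path so it can be compared with log entries.
        urlparse(loc.text.strip()).path or "/"
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }
    return sorted(sitemap_paths - set(crawled_paths))


if __name__ == "__main__":
    # Paths assumed to come from your own Googlebot log extraction.
    crawled = {"/", "/products/a"}
    print(uncrawled_sitemap_paths(SAMPLE_SITEMAP, crawled))
```

Pages that stay in this list for a long time are candidates for stronger internal linking; the list says nothing about how those pages would rank once crawled.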

Practical impact and recommendations

- Stop correlating crawl spikes with algorithm update announcements in your reports

- Analyze your server logs to identify the real causes of variations: architecture changes, new content, server performance

- Focus on crawl budget optimization: eliminate low-value pages, optimize your internal linking, reduce redirect chains

- Monitor technical metrics: server response time, HTTP errors, click depth of crawled pages

- Improve your technical infrastructure to facilitate Googlebot's work: loading time, server stability, up-to-date XML sitemap

- In your analyses, clearly separate crawl issues (discovery and fetching of pages) from ranking issues (positions in search results)

- Prioritize indexing of strategic pages via internal linking and sitemaps rather than passively waiting for increased crawl

- Document technical changes made to your site to explain observed crawl variations
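The log analysis recommended above can start with something as simple as counting Googlebot requests per day and grouping them by HTTP status class. The following is a minimal sketch under stated assumptions: the access log uses the Apache/Nginx Combined Log Format, Googlebot is identified by its user-agent string only (which can be spoofed, so production analysis would also verify the requester, for example through reverse DNS), and the names `googlebot_daily_summary` and `SAMPLE_LOG` plus the log lines themselves are invented for illustration.

```python
import re
from collections import Counter, defaultdict
from datetime import datetime

# Combined Log Format:
# host ident user [time] "request" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical log lines, used only for illustration.
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Mar/2024:06:15:02 +0000] "GET /products/a HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2024:06:15:09 +0000] "GET /products/b HTTP/1.1" 200 4980 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Mar/2024:06:16:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    f'66.249.66.1 - - [11/Mar/2024:07:00:00 +0000] "GET /products/a HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [11/Mar/2024:07:00:05 +0000] "GET /search?q=x HTTP/1.1" 503 0 "-" "{GOOGLEBOT_UA}"',
]


def googlebot_daily_summary(lines):
    """Return {ISO date: {status class: hits}} for requests whose user agent mentions Googlebot."""
    summary = defaultdict(Counter)
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue  # Skip malformed lines and non-Googlebot traffic.
        day = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z").date()
        status_class = match.group("status")[0] + "xx"  # e.g. 200 -> "2xx"
        summary[day.isoformat()][status_class] += 1
    return {day: dict(counts) for day, counts in summary.items()}


if __name__ == "__main__":
    for day, counts in sorted(googlebot_daily_summary(SAMPLE_LOG).items()):
        print(day, counts)
```

Placing these daily counts next to your record of deployments and server incidents makes it easier to attribute a crawl change to a technical cause, such as a rise in 5xx responses, rather than to an algorithm update.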

Crawl budget optimization and detailed server log analysis require solid technical expertise and dedicated analysis tools. These diagnostics often involve cross-referencing several data sources (logs, Search Console, simulated crawls) and interpreting Googlebot's behavior in the context of your own site. For complex or large-scale sites, support from a specialized SEO agency can help put in place a crawl optimization strategy suited to your business needs.
