Official statement

What you need to understand

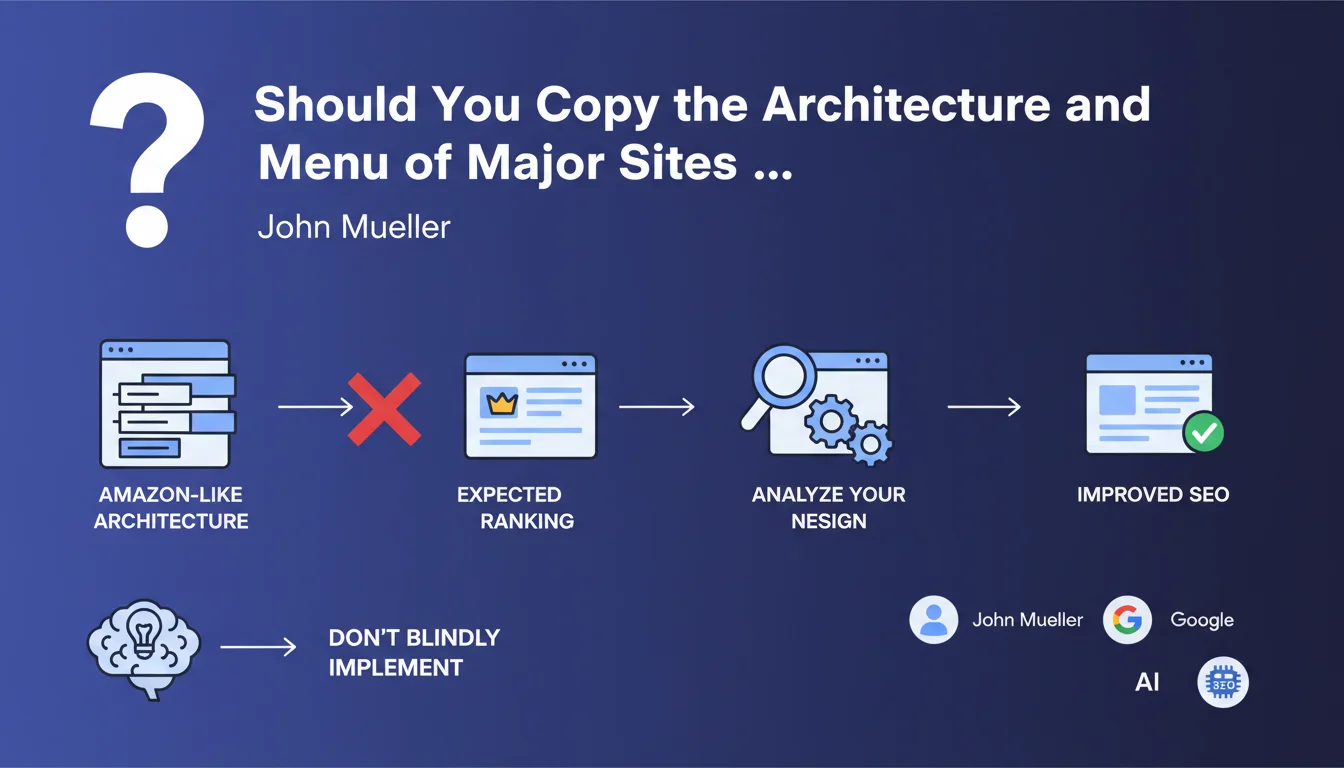

Why is copying Amazon or other web giants a bad SEO strategy?

John Mueller's statement highlights a common mistake: believing that reproducing the technical choices of web behemoths will guarantee the same success. Major e-commerce sites like Amazon have colossal domain authority, decades of history, and millions of natural backlinks.

Their complex architecture (3-level menus, deep structures) works despite certain technical weaknesses, not because of them. A small site that copies these structures inherits the flaws without benefiting from the advantages linked to Amazon's massive authority.

What actually makes large sites rank?

Web giants rank thanks to exceptional trust signals: brand awareness, volume of branded searches, massive user signals, and a phenomenal amount of indexed content. Their technical architecture is just one factor among others.

A small or medium-sized site must optimize every aspect because it doesn't have these compensatory signals. An unsuitable structure will directly penalize its crawl budget, internal linking, and user experience.

What are the concrete risks of this copycat approach?

Blindly copying exposes you to several major dangers: dilution of crawl budget across unnecessary depth levels, increased loading time due to complex menus, and user confusion when faced with navigation unsuited to the actual product volume.

- Loss of crawl budget: Google crawls a site with too many navigation levels less efficiently

- Degraded user experience: a complex menu confuses users on a limited catalog

- Negative quality signal: copying reveals a lack of proper strategic thinking

- SEO juice dilution: internal PageRank disperses in an overly deep structure

- Problematic indexing: some important pages may remain too deep to be crawled regularly
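The dilution point can be illustrated with a toy model. The sketch below is a simplified, textbook-style PageRank iteration over a hypothetical four-page site. It is not Google's actual ranking computation, and the function name, URLs, and damping value are assumptions made for the example. It compares the score a product page receives in a deep chain with the score it receives when the homepage links to it directly.

```python
def internal_pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration over an internal link graph.

    `links` maps each page to the list of pages it links to.
    This is a teaching model, not how Google scores pages.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            # A page with no outgoing links spreads its score evenly.
            targets = links.get(page) or list(pages)
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical deep structure: the product sits three clicks from the homepage.
deep_site = {
    "/": ["/c"],
    "/c": ["/", "/c/s"],
    "/c/s": ["/", "/c/s/p"],
    "/c/s/p": ["/"],
}

# Hypothetical flat structure: same pages, all linked from the homepage.
flat_site = {
    "/": ["/c", "/c/s", "/c/s/p"],
    "/c": ["/"],
    "/c/s": ["/"],
    "/c/s/p": ["/"],
}

deep_rank = internal_pagerank(deep_site)
flat_rank = internal_pagerank(flat_site)
print(f"deep: {deep_rank['/c/s/p']:.3f}  flat: {flat_rank['/c/s/p']:.3f}")
```

In this toy graph the product page ends up with a noticeably higher score in the flat version, which is the intuition behind keeping strategic pages close to the homepage.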

SEO Expert opinion

Is Mueller's recommendation consistent with practices observed in the field?

Absolutely. After 15 years of SEO audits, I have observed that the most successful sites are those that have developed a custom architecture tailored to their catalog and specific audience. In my experience, attempts to copy a giant's structure consistently fail.

Tools like Screaming Frog or Oncrawl reveal that "copycat" sites tend to show excessive click depth, orphan pages, and uneven internal PageRank distribution. Conversely, successful sites have thought through their own categorization logic.
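As a rough illustration of what an orphan-page check does, here is a minimal sketch. It assumes you already have a list of known URLs (for example from a sitemap) and a map of internal links exported from a crawl. The function name and the sample data are hypothetical, and this does not reproduce how any particular crawler works internally.

```python
def orphan_pages(known_urls, links, home="/"):
    """Return known URLs that no internal link points to.

    `links` maps each crawled page to the list of pages it links to.
    The homepage is excluded because it is the crawl entry point.
    """
    linked = {target for targets in links.values() for target in targets}
    return sorted(set(known_urls) - linked - {home})


# Hypothetical data: the sitemap lists a guide that nothing links to.
sitemap_urls = ["/", "/shoes", "/shoes/model-x", "/guides/sizing"]
internal_links = {
    "/": ["/shoes"],
    "/shoes": ["/shoes/model-x"],
}

print(orphan_pages(sitemap_urls, internal_links))
```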

What nuances should be added to Google's position?

While you shouldn't copy blindly, it would be foolish to completely ignore best practices observed among leaders. The challenge is distinguishing between universal principles and specific implementations.

Principles to remember: facilitate access to important pages within 3 clicks maximum, use consistent breadcrumbs, optimize internal linking to strategic pages. But the concrete implementation (number of levels, menu type) must stem from your unique context.
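The 3-click principle can be checked with a breadth-first search over your internal link graph. The sketch below assumes the graph is available as a dictionary mapping each page to the pages it links to (for instance, built from a crawl export). The function names and the sample site are hypothetical.

```python
from collections import deque


def click_depths(links, home="/"):
    """Return the minimum number of clicks from `home` to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def pages_deeper_than(links, home="/", max_clicks=3):
    """List pages that need more than `max_clicks` clicks from the homepage."""
    depths = click_depths(links, home)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Hypothetical site with one product buried four clicks deep.
site_links = {
    "/": ["/shoes", "/bags"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/trail"],
    "/shoes/running/trail": ["/shoes/running/trail/model-x"],
    "/bags": ["/bags/tote"],
}

print(pages_deeper_than(site_links))
```

Pages that never appear in the result of `click_depths` are unreachable from the homepage through internal links, which is a separate problem worth investigating.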

When can you draw inspiration from major sites without risk?

Inspiration becomes relevant when you analyze the underlying principles rather than the visible implementation. For example, Amazon excels in faceted filters, customer review management, and product page optimization.

These elements, adapted to your scale, can improve your SEO. But copying their 3-level menu when you have 200 products versus their millions makes no sense. Context determines structure, not the other way around.

Practical impact and recommendations

How do you define the ideal architecture for your own site?

Start with an audit of your catalog: number of products/services, natural categorization logic, queries actually typed by your customers. Then analyze user behavior via Google Analytics and heatmaps.

Your structure must stem from this analysis, not from copying. For a site with fewer than 1,000 pages, a 2-level architecture is generally sufficient. Between 1,000 and 10,000 pages, use 3 levels at most. Beyond that, consider multiple entry points (thematic landing pages, faceted filters).
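These thresholds are rules of thumb, but writing them down as a small helper makes them easy to apply during an audit. The function name below is hypothetical, and the cut-offs simply restate the figures given above.

```python
def suggested_max_levels(page_count):
    """Return a rule-of-thumb maximum number of navigation levels.

    Returns None above 10,000 pages, where a fixed level count matters less
    than adding entry points (thematic landing pages, faceted filters).
    """
    if page_count < 0:
        raise ValueError("page_count must be non-negative")
    if page_count < 1000:
        return 2
    if page_count <= 10000:
        return 3
    return None


for count in (200, 4500, 250000):
    print(count, suggested_max_levels(count))
```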

Test systematically: crawl data will show whether your important pages are easily accessible, and Google Search Console will reveal pages discovered but not indexed.

What concrete mistakes must absolutely be avoided?

The first mistake is creating empty or nearly empty navigation levels "to do like the big sites." Each level must have a clear business and SEO justification. If a category contains fewer than 5 products, it probably doesn't justify its own level.
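A quick way to spot such categories is to count products per category in a catalog export. This sketch assumes a simple mapping from category name to product count. The function name and the sample catalog are hypothetical, and the threshold of 5 is the rule of thumb mentioned above.

```python
def thin_categories(product_counts, min_products=5):
    """Return categories holding fewer than `min_products` products."""
    return sorted(
        name for name, count in product_counts.items() if count < min_products
    )


# Hypothetical catalog export.
catalog = {
    "Running shoes": 42,
    "Trail shoes": 3,
    "Laces": 0,
    "Bags": 17,
}

print(thin_categories(catalog))
```

Categories flagged this way are candidates for merging into a parent category or being handled as a filter rather than a navigation level.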

Second pitfall: neglecting contextual internal linking in favor of a complex menu. Links within content (complementary products, buying guides) transmit PageRank better than an overloaded mega-menu.

- Map your actual inventory before defining architecture

- Verify that each important page is accessible within a maximum of 3 clicks from the homepage

- Analyze crawl depth with Screaming Frog or equivalent

- Test menu user experience on mobile as a priority

- Avoid complex dropdown menus that dilute crawl budget

- Prioritize clarity and simplicity over menu exhaustiveness

- Use breadcrumbs to structure hierarchy rather than an overloaded menu

- Monitor your pages' indexation rate in Google Search Console

How can you verify that your current structure is optimal?

Use Search Console to identify pages discovered but not indexed: this is often a sign of an overly deep structure. Also analyze the click-through rate from category pages to product pages.

A server log audit will reveal which pages Google actually crawls and how frequently. If your strategic pages aren't crawled regularly, your architecture is problematic.
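A first pass at such a log audit can be sketched as follows. The example assumes access logs in the common "combined" format and identifies Googlebot by its user-agent string only. User agents can be spoofed, so a real audit should also verify the requesting IPs (Google documents a reverse DNS check for this). The function names, sample log lines, and list of strategic pages are hypothetical.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(log_lines):
    """Count requests per path whose user agent claims to be Googlebot."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


def uncrawled(strategic_paths, hits):
    """Return strategic paths with no Googlebot request in the analyzed logs."""
    return [path for path in strategic_paths if hits[path] == 0]


# Hypothetical log excerpt.
sample_log = [
    '66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /shoes HTTP/1.1" 200 5120 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Oct/2024:14:02:11 +0000] "GET /shoes HTTP/1.1" 200 5120 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Oct/2024:14:05:00 +0000] "GET /bags HTTP/1.1" 200 4096 '
    '"-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

hits = googlebot_hits(sample_log)
print(hits.most_common())
print(uncrawled(["/shoes", "/bags"], hits))
```

Run over several weeks of logs, the same counts give a crawl frequency per page that you can compare against your list of strategic URLs.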
