Official statement

What you need to understand

Why are test sites so frequently indexed by Google?

The phenomenon of accidental indexing of pre-production environments is extremely common. It occurs when Google's robots discover and index URLs that were not intended to be public.

These situations typically happen when technical teams forget to implement access barriers or when an external link accidentally points to the development environment. The consequences can be serious: duplicate content, unfinished versions visible publicly, or worse, sensitive data exposed.

What methods does Google recommend for protecting a test site?

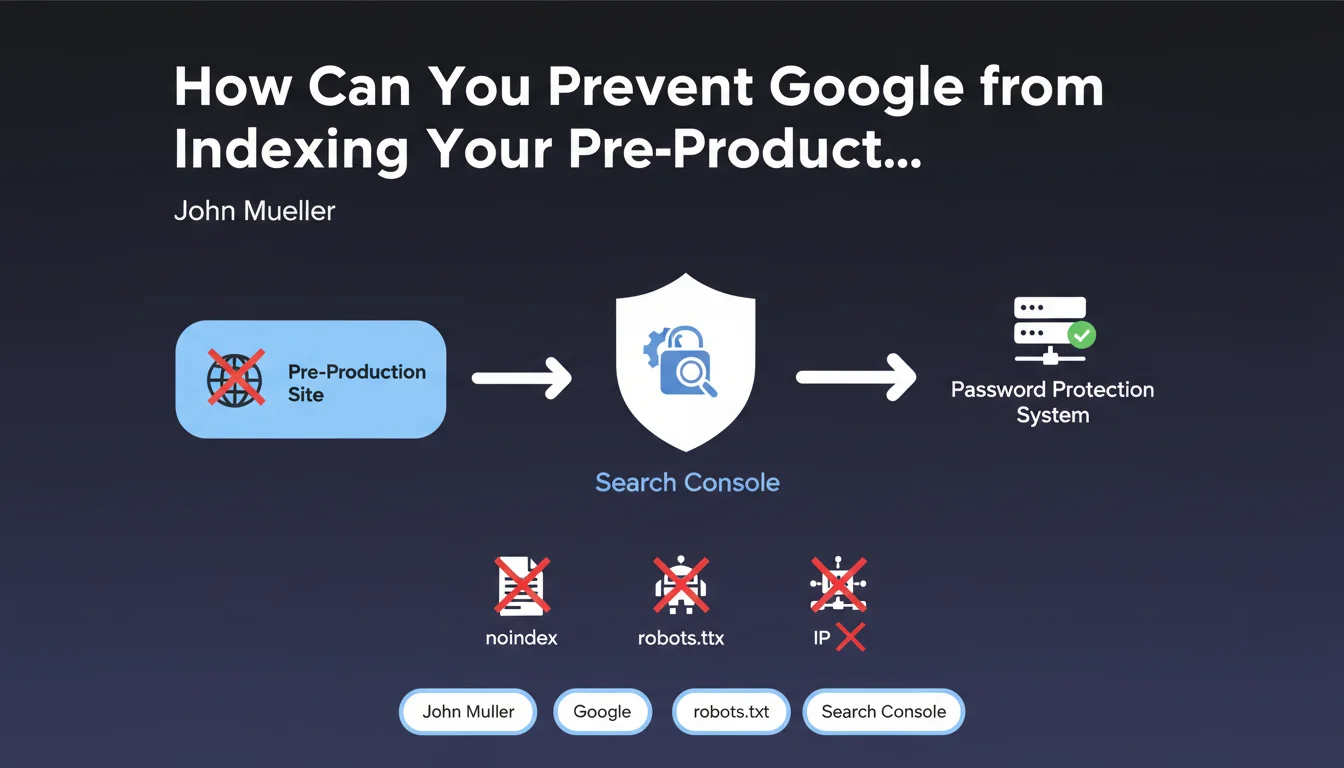

According to this official statement, HTTP password protection is the most reliable method. This approach completely prevents robots from accessing the content, unlike noindex tags which require the robot to first access the page.
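To make the difference concrete, here is a minimal sketch in Python that classifies a single HTTP response from a test environment. The function name `classify_protection` and the three labels are illustrative assumptions, not part of Google's statement. The point it illustrates is that a noindex directive only exists inside a response the crawler has already received, whereas a 401 or 403 status means no content was delivered at all.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects the content attribute of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name.lower(): (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            self.directives.append(attr.get("content", "").lower())


def classify_protection(status, headers, body):
    """Return 'blocked', 'noindex-only', or 'exposed' (hypothetical labels)."""
    # 401/403: the crawler is refused before any page content is served.
    if status in (401, 403):
        return "blocked"

    # X-Robots-Tag is the HTTP-header form of the noindex directive.
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            header_value = value.lower()

    # The meta robots tag can only be read once the HTML has been fetched.
    parser = _RobotsMetaParser()
    parser.feed(body)
    has_meta_noindex = any("noindex" in d for d in parser.directives)

    if "noindex" in header_value or has_meta_noindex:
        return "noindex-only"
    return "exposed"
```

A page classified as `noindex-only` is still fully readable by anyone who has the URL, which is why the statement ranks it below authentication.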

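IP address whitelisting is also a robust solution, limiting access to authorized collaborators only. Both methods are preferable to robots.txt or noindex meta robots tags, which are easy to misconfigure. A robots.txt rule blocks crawling but not indexing, so a URL that is linked from elsewhere can still appear in the index, and a noindex tag only works if the robot is allowed to fetch the page and read it.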
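As an illustration of the whitelisting logic, the following sketch uses Python's standard `ipaddress` module. The networks listed are documentation-only example ranges and the function name `is_allowed` is hypothetical. In practice this check usually lives in the web server or firewall configuration rather than in application code.

```python
import ipaddress

# Assumed allowlist: an office range and a single VPN exit address.
# Both are reserved documentation ranges, used here as placeholders.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.7/32"),
]


def is_allowed(client_ip, networks=ALLOWED_NETWORKS):
    """Return True only if client_ip parses and falls inside an allowed network."""
    try:
        address = ipaddress.ip_address(client_ip)
    except ValueError:
        # Malformed input is rejected rather than allowed through.
        return False
    return any(address in network for network in networks)
```

Any request for which `is_allowed` returns `False`, including a search engine crawler, would be refused before the page is generated.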

What should you do if your test site is already indexed?

Search Console becomes your best ally in this emergency situation. It allows you to quickly submit URL removal requests to remove unwanted pages from the index.

However, this action must be accompanied by permanent protection (password or IP whitelisting); otherwise the pages can be reindexed. Removal via Search Console is only temporary, lasting about 6 months.

- HTTP password protection: the safest method recommended by Google

- IP whitelisting: robust alternative to limit access to authorized teams

- Search Console: rapid removal tool in case of accidental indexing

- Noindex tags and robots.txt are less reliable for development environments

- Test site indexation can create duplicate content that competes with the production site

SEO Expert opinion

Does this recommendation align with practices observed in the field?

Absolutely, and it's actually one of the most frequent problems I encounter in SEO audits. The number of indexed pre-production sites is indeed considerable, often discovered too late during in-depth analyses.

Google's preference for HTTP authentication over noindex directives makes perfect sense. A robots.txt file can be misconfigured and a noindex tag can be forgotten on certain pages, but authentication refuses the request at the server, whatever the page contains. It is a much more robust barrier.

What important nuances should be added to this advice?

Search Console is only effective if you have already verified ownership of the domain in question. For a test site on a temporary subdomain or domain, you must first complete this verification, which can take time.

Moreover, removal via Search Console is temporary (approximately 6 months). Without permanent protection, pages will be automatically reindexed. It is therefore an emergency measure that must be paired with permanent protection of the environment.

In what cases does this approach require adaptations?

For large organizations with multiple environments (dev, staging, UAT, pre-prod), management becomes complex. You must then implement a global policy with standardized procedures and automated checks.

Sites using CDNs or caching systems must be particularly vigilant. HTTP authentication must be configured at the right level to avoid being bypassed. Similarly, modern JavaScript applications (SPAs) require special attention because authentication must block access before any rendering.

Practical impact and recommendations

What concrete actions should be implemented immediately?

The first step consists of auditing all your development and pre-production environments. Perform a Google search with the "site:" operator on each test domain or subdomain to verify what is currently indexed.
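When there are many environments, the list of searches to run can be generated. The sketch below only builds the search URLs for manual review; it does not query Google automatically. The hostnames are placeholders and the function names are hypothetical.

```python
from urllib.parse import urlencode


def site_query_url(hostname):
    """Build a Google search URL using the site: operator for one hostname."""
    return "https://www.google.com/search?" + urlencode({"q": f"site:{hostname}"})


def build_audit_urls(hostnames):
    """Map each test hostname to the search URL to open during the audit."""
    return {host: site_query_url(host) for host in hostnames}


if __name__ == "__main__":
    # Placeholder hostnames; replace with your own environments.
    for host, url in build_audit_urls(["dev.example.com", "staging.example.com"]).items():
        print(host, url)
```

Opening each URL in a browser shows whether any pages from that environment currently appear in the results.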

If you discover indexed pages, act quickly: verify ownership in Search Console if not already done, then submit removal requests for each affected URL or directory. Then, immediately implement protection through HTTP authentication.

How do you configure effective and sustainable protection?

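HTTP Basic authentication (with a password file created by htpasswd, which both Apache and Nginx can read) remains the simplest and most universal solution. It blocks access at the web server level, before any content is generated or served. Use it over HTTPS, since Basic credentials are only Base64-encoded, not encrypted.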
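For readers curious about what the server actually checks, here is a minimal sketch of HTTP Basic credential validation in Python. It is not how Apache or Nginx are implemented; the function name `check_basic_auth` and the credentials are assumptions for illustration. The `Authorization: Basic <base64(user:password)>` header format itself is the standard one.

```python
import base64
import binascii
import hmac


def check_basic_auth(header_value, expected_user, expected_password):
    """Return True if an Authorization header carries the expected credentials."""
    if not header_value:
        return False  # No header: the server would answer 401.

    scheme, _, token = header_value.partition(" ")
    if scheme.lower() != "basic":
        return False

    try:
        decoded = base64.b64decode(token.strip(), validate=True).decode("utf-8")
    except (binascii.Error, UnicodeDecodeError):
        return False  # Not valid Base64 or not valid text.

    user, separator, password = decoded.partition(":")
    if not separator:
        return False

    # compare_digest avoids leaking information through comparison timing.
    user_ok = hmac.compare_digest(user.encode(), expected_user.encode())
    password_ok = hmac.compare_digest(password.encode(), expected_password.encode())
    return user_ok and password_ok
```

A crawler sends no `Authorization` header, so it falls into the first branch and never receives the page.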

For teams that need frequent access, IP whitelisting combined with a corporate VPN offers an excellent compromise between security and ease of use. This configuration prevents all external access while facilitating collaborators' work.

What critical mistakes must you absolutely avoid?

Never rely solely on noindex tags or robots.txt to protect a development environment. These methods are too fragile and can be bypassed or misconfigured during deployment.

Also avoid using predictable subdomains (dev.yoursite.com, test.yoursite.com) without protection. Scanners and malicious actors routinely probe these patterns. Favor less obvious names, but only as a complement to strong authentication, never as a substitute for it.

- Systematically audit all non-production environments with the "site:" operator in Google

- Verify ownership of all your domains and subdomains in Search Console

- Implement HTTP authentication (htpasswd) on all development environments

- Configure IP whitelisting as an alternative or complementary protection

- Submit removal requests for all accidentally indexed URLs

- Establish a deployment checklist including verification of protections

- Train technical teams on indexation risks of test environments

- Set up automated alerts to detect any unwanted indexation

- Document protection procedures in an internal guide accessible to everyone

- Conduct quarterly audits to verify that all protections are still active
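The automated checks mentioned in this list could look roughly like the following sketch, which requests each environment without credentials and reports any that answer with something other than 401 or 403. The URLs are placeholders, the function names are hypothetical, and treating unreachable hosts as "not exposed" is an assumption that suits firewall-based IP filtering but may not suit every setup.

```python
import urllib.error
import urllib.request


def http_status(url, timeout=10):
    """Return the status of an unauthenticated GET, or None if unreachable."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises for 4xx/5xx; the status code is what we want here.
        return error.code
    except (urllib.error.URLError, OSError):
        return None


def find_unprotected(urls, fetch_status=http_status):
    """Return (url, status) pairs for environments that served a response
    other than 401 or 403 to an anonymous request."""
    unprotected = []
    for url in urls:
        status = fetch_status(url)
        if status is not None and status not in (401, 403):
            unprotected.append((url, status))
    return unprotected


if __name__ == "__main__":
    # Placeholder environments; replace with your own list.
    for url, status in find_unprotected(["https://staging.example.com/"]):
        print(f"WARNING: {url} answered {status} without credentials")
```

Run on a schedule or as a deployment step, a non-empty result is the signal to restore protection before a crawler finds the environment.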
