Official statement

At least the message has the merit of being clear.

What you need to understand

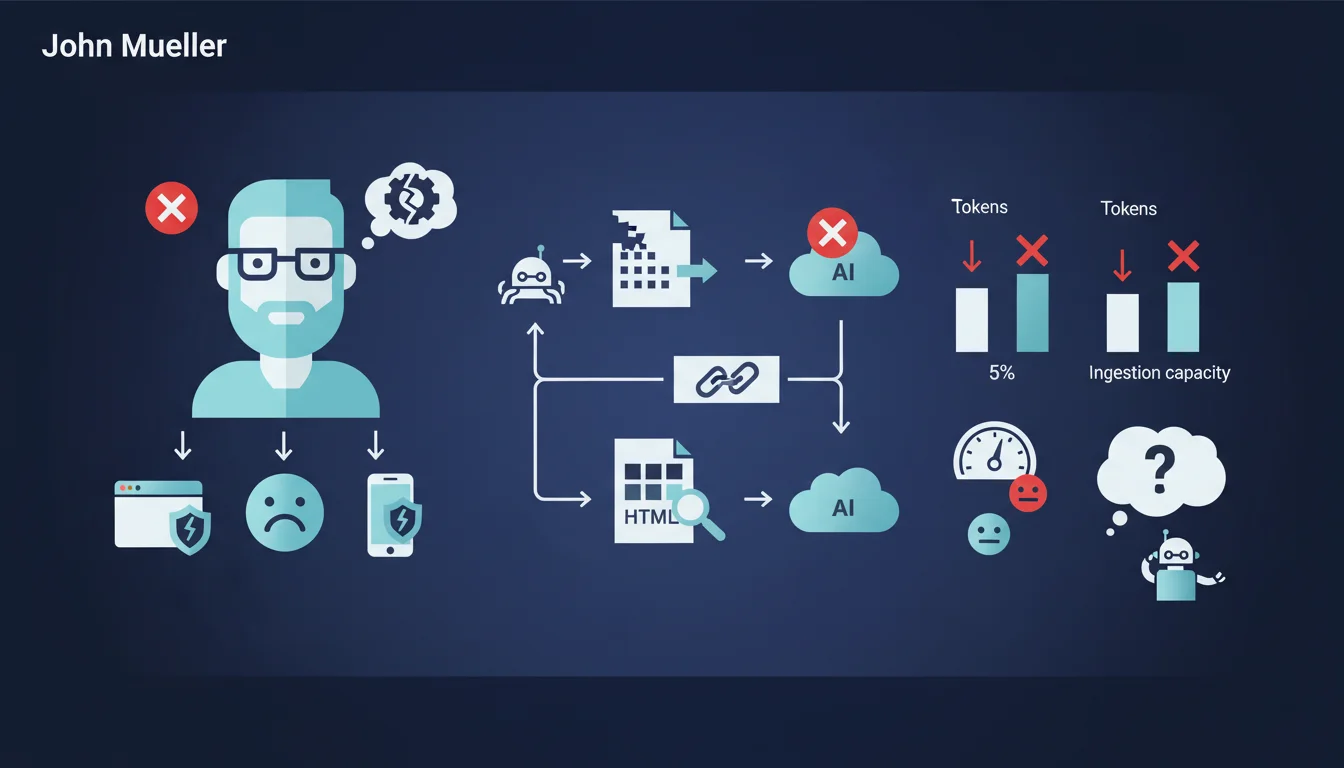

A new practice is emerging in the developer community: detecting AI bots (like those powering ChatGPT or Perplexity) and serving them content in raw Markdown format rather than the usual complete HTML.

The idea behind this technique? Optimize content ingestion by retrieval-augmented generation (RAG) systems by drastically reducing token usage, by as much as 95% in some cases. Fewer HTML tags mean less data for these language models to process.

Google, through John Mueller, has categorically rejected this approach, even calling it a stupid idea. His position is unequivocal: this AI cloaking technique provides no value and should be avoided.

- Technique concerned: serving Markdown to AI bots instead of standard HTML

- Intended objective: reduce token consumption and improve AI indexing

- Google's position: total opposition, considers this practice useless or even counterproductive

- Implication: no need to create alternative content versions for AI crawlers

SEO Expert opinion

Google's position is perfectly consistent with its fundamental principles against cloaking. Serving different content based on user-agent has always been a risky practice, even if in this specific case it involves AI bots and not Googlebot.

Modern language models are perfectly capable of parsing HTML and extracting the relevant content from it. Adding a detection and conversion layer introduces technical complexity for virtually no real benefit. Mueller's irony about images underscores the absurdity of this logic when taken to its extreme.
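To make the point about HTML parsing concrete, here is a minimal sketch using only Python's standard library. It pulls the visible text out of a small HTML page and estimates how much of the page is markup. The class name, the helper functions, and the sample page are all hypothetical, and the character ratio is only a rough proxy, not a token count from any real model.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text nodes and skips the contents of script and style elements."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside script/style blocks.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def extract_text(html: str) -> str:
    """Return the visible text of an HTML document as one space-joined string."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def markup_share(html: str) -> float:
    """Fraction of characters that are not visible text (a rough proxy only)."""
    if not html:
        return 0.0
    return 1 - len(extract_text(html)) / len(html)


# Hypothetical sample page, used only for illustration.
sample = """<html><head><title>Demo</title><style>p{color:red}</style></head>
<body><div class="wrapper"><h1>Hello</h1><p>Plain <b>text</b> here.</p></div>
<script>console.log("x")</script></body></html>"""

print(extract_text(sample))
print(f"markup share: {markup_share(sample):.0%}")
```

Even this naive extractor recovers the content in a few dozen lines, which suggests that a separate Markdown version adds little that a consumer of the page cannot already do for itself.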

Furthermore, web standards exist for a reason. Well-structured, semantic HTML with appropriate markup (schema.org vocabulary, h1 to h6 heading tags, etc.) remains the best way to communicate information, whether for Google, generative AI, or users with assistive technologies.
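As an illustration of schema.org markup, the following sketch builds a JSON-LD snippet for an article with Python's `json` module. The function name and every value passed to it are placeholders, and the chosen properties (`headline`, `author`, `datePublished`, `mainEntityOfPage`) are just one plausible subset of the schema.org `Article` type, not a required or complete set.

```python
import json


def article_json_ld(headline: str, author_name: str, date_published: str, url: str) -> str:
    """Return a <script> element carrying schema.org Article data as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date string
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the surrounding script element early.
    payload = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values, for illustration only.
print(article_json_ld(
    headline="Example headline",
    author_name="Jane Doe",
    date_published="2025-01-01",
    url="https://example.com/article",
))
```

The resulting element goes in the page's HTML and is served identically to every visitor, human or bot, which is the opposite of maintaining a parallel version per crawler.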

Practical impact and recommendations

- Do not create Markdown or simplified versions specific to AI bots

- Avoid any form of cloaking based on user-agent detection, even for non-Google bots

- Optimize your existing HTML: clear semantic structure, hierarchical h1 to h6 headings, schema.org markup

- Clean up your code: remove superfluous HTML, unnecessary divs, and scripts that are not essential for initial rendering

- Prioritize performance: a fast and lightweight site benefits all crawlers, AI included

- Clearly document your content with structured metadata (JSON-LD, Open Graph)

- Test your rendering with different user-agents to ensure all receive equivalent content

- If you have already implemented this technique, consider removing it to avoid future complications
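The recommendation to test rendering with different user-agents can be sketched as a small parity check. The script below fetches the same URL under several user-agent strings and reports which ones receive a body that differs from the browser baseline. The function names are hypothetical and the user-agent strings are simplified placeholders, so check each vendor's documentation for the real values. Pages that embed timestamps, nonces, or personalized content will differ on every request, so treat a mismatch as a prompt for manual inspection rather than proof of cloaking.

```python
import hashlib
import re
import urllib.request

# Simplified placeholder strings, not the exact values sent by real crawlers.
USER_AGENTS = {
    "browser": "Mozilla/5.0 (X11; Linux x86_64) ExampleBrowser/1.0",
    "ai-bot": "Mozilla/5.0 (compatible; ExampleAIBot/1.0)",
}


def fetch_body(url: str, user_agent: str, timeout: float = 10.0) -> str:
    """Download a URL while presenting the given User-Agent header."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def fingerprint(body: str) -> str:
    """Hash the body after collapsing whitespace, so formatting noise is ignored."""
    normalized = re.sub(r"\s+", " ", body).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_mismatches(url, user_agents, fetch=fetch_body, baseline="browser"):
    """Return the names of user-agents whose response differs from the baseline."""
    digests = {name: fingerprint(fetch(url, ua)) for name, ua in user_agents.items()}
    reference = digests[baseline]
    return [name for name, digest in digests.items() if digest != reference]
```

Calling `find_mismatches("https://example.com/", USER_AGENTS)` returns an empty list when every user-agent receives the same body. The `fetch` parameter is injectable so the comparison logic can be exercised offline with a stub instead of real network requests.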

These technical optimizations, while conceptually simple, often require deep expertise in web architecture and a thorough understanding of current SEO challenges. Implementing an optimal HTML structure while preserving a modern site's functionality can prove delicate. For businesses looking to maximize their visibility on both traditional search engines and emerging AI platforms, a specialized SEO agency can provide a comprehensive technical audit and a personalized optimization strategy tailored to the specifics of each project.
